Late last year we helped our awesome client Banana Moon increase newsletter signups via their website by 71,100%. This article explains the process behind that result and some surprising insights we found along the way.

The goal

Before starting any experiment or making any changes to a website, it’s important to have identified a clear, actionable goal. Without one you have nothing to measure the success or failure of a change against. Ultimately, that means there is no way of knowing whether a client’s investment has been well spent. At Fantastic we pride ourselves on being results driven: everything we do needs to deliver a return on investment for our clients, and without a goal, how would we prove that?

When choosing a goal it’s important not to be too broad. For example, any e-commerce website’s goal is for more people to complete an order – this is called a macro goal. When planning experiments we need to refine our goals a little more and look at the smaller steps along the buying process – these are called micro goals. Banana Moon had a very clear and actionable goal: increase newsletter signups via the website (banana-moon-clothing.co.uk). We know what we’re aiming to achieve, so now it’s time to think about how we do it.

The experiment

After doing our homework we decided to trial a newsletter signup popup across the site using A/B testing. We put some rules in place for how and when the popup would show:

- Customers would only see the popup once

- If a customer clicked away from the popup it would close and not show again

- The popup would show after a customer had shown interest or engagement on a page
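The three rules above can be sketched as a single decision function. This is a language-agnostic illustration written in Python, not the code that ran on the site. The article does not say how "interest or engagement" was measured, so the time-on-page and scroll thresholds below, along with every name in the block, are assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class VisitorState:
    """What we know about a visitor when deciding whether to show the popup."""
    has_seen_popup: bool       # rule 1: the popup is only ever shown once
    has_dismissed_popup: bool  # rule 2: clicking away closes it for good
    seconds_on_page: float     # hypothetical engagement signal
    scroll_fraction: float     # hypothetical engagement signal, 0.0 to 1.0


# Assumed thresholds; the real experiment's engagement criteria are not stated.
MIN_SECONDS_ON_PAGE = 10.0
MIN_SCROLL_FRACTION = 0.5


def is_engaged(state: VisitorState) -> bool:
    """Rule 3: treat a visitor as engaged once either signal passes its threshold."""
    return (
        state.seconds_on_page >= MIN_SECONDS_ON_PAGE
        or state.scroll_fraction >= MIN_SCROLL_FRACTION
    )


def should_show_popup(state: VisitorState) -> bool:
    """Apply the three display rules in order."""
    if state.has_seen_popup or state.has_dismissed_popup:
        return False
    return is_engaged(state)
```

Keeping the decision in one pure function makes the rules easy to check in isolation before wiring them to real page events.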

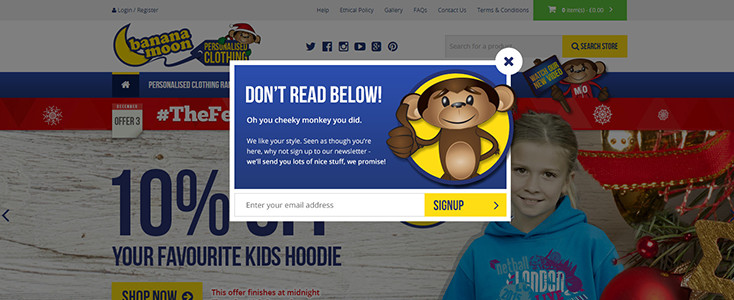

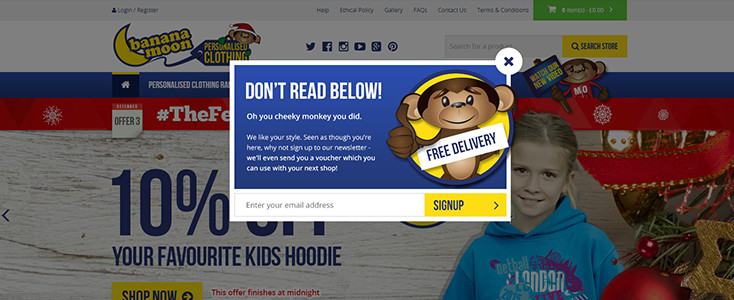

Initially we thought about trialling two variations: one with a popup and one without. Before starting the experiment we decided to add one more variation, showing a popup with an incentive on it. The variations looked like this:

Variation 1: No popup

Variation 2: Popup without incentive

Variation 3: Popup with incentive

What is A/B testing?

A/B testing is a way of comparing how a number of web page variations perform against each other to find out which one performs best. Read more about A/B testing here: https://www.optimizely.com/ab-testing/

How does the experiment work?

The experiment works using a ‘Multi-armed bandit’ technique. This type of experiment has two key points to remember:

- The goal is to find the best and most profitable variation

- The distribution of sessions to each variation can be updated as the experiment progresses

In practice, if a certain variation becomes more successful as the experiment progresses, it is sent more traffic, and less traffic goes to the less successful variations. This allows us to convert more customers while still getting a full picture of how the other variations are performing.
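To make the traffic-shifting idea concrete, here is a minimal sketch of one common multi-armed bandit strategy, Thompson sampling. It is not necessarily the exact algorithm the testing tool used, and the signup rates are hypothetical values chosen for illustration, not results from this experiment.

```python
import random


def choose_variation(successes, failures, rng):
    """Thompson sampling: draw a plausible signup rate for each variation
    from its Beta posterior and send the session to the highest draw."""
    draws = [rng.betavariate(s + 1, f + 1) for s, f in zip(successes, failures)]
    return draws.index(max(draws))


def simulate(true_rates, sessions, seed=0):
    """Simulate an experiment and return how many sessions each variation received."""
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    for _ in range(sessions):
        arm = choose_variation(successes, failures, rng)
        # A session "converts" with the variation's (hypothetical) true rate.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return [s + f for s, f in zip(successes, failures)]


# Hypothetical signup rates for: no popup, popup without incentive, popup with incentive.
HYPOTHETICAL_RATES = [0.001, 0.05, 0.09]
sessions_per_variation = simulate(HYPOTHETICAL_RATES, sessions=5000)
print(sessions_per_variation)
```

Early on, sessions are spread fairly evenly because little is known about any variation. As evidence accumulates, the variation with the highest signup rate receives most of the traffic, while the others still get occasional sessions.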

You can read all about multi-armed bandit experiments here: https://support.google.com/analytics/answer/2844870?hl=en

The results

Measuring success

As mentioned at the start of this article, we started this experiment with a very clear goal, which was to increase the number of newsletter signups via the website. Before we set the experiment running we needed to make sure everything was in place to track the success of each variation.

To do this we used Google Analytics. Because submitting the popup form didn’t take you to another page, we couldn’t track signups as a page view, so we created an event-based goal instead. We then used the completion of this goal to track the success of each variation.

What are events?

Events are user interactions with different elements and content within a site that are tracked independently from a web page or screen load.

What we found

As the title of this article says, the experiment resulted in a massive 71,100% increase in newsletter signups on the website. At the outset we had a pretty good idea that the variations with a popup would outperform the one without, but we were surprised by how much. Variation 2 (popup without incentive) increased the chances of someone signing up to the newsletter by 4,900%. Variation 3 (popup with incentive) increased the chances by 9,180%.
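Percentages this large can be hard to picture, so it helps to convert them into multipliers. A 71,100% increase means 712 times the baseline, a 4,900% increase means 50 times, and a 9,180% increase means roughly 92.8 times. The short sketch below shows the arithmetic. The helper names are our own, and because the raw signup counts are not included in this article, it works only from the published percentages.

```python
def percent_increase(baseline, new_value):
    """Percentage increase from baseline to new_value."""
    if baseline == 0:
        raise ValueError("percentage increase is undefined for a zero baseline")
    return (new_value - baseline) / baseline * 100


def multiplier_from_percent_increase(percent):
    """Convert a percentage increase into 'times the baseline'."""
    return 1 + percent / 100


# Percentages quoted in the article, expressed as multiples of the baseline.
for label, percent in [
    ("overall signups", 71_100),
    ("popup without incentive", 4_900),
    ("popup with incentive", 9_180),
]:
    print(f"{label}: {multiplier_from_percent_increase(percent):.1f}x the baseline")
```

The zero-baseline check matters in practice: if a control variation records no signups at all, a percentage increase cannot be calculated and absolute numbers have to be reported instead.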

Other insights

Although they weren’t the focus of this experiment, there were some other interesting insights to be gleaned from it. Firstly, we found that people who signed up to the newsletter engaged with twice as much content and spent twice as long on the site.

We also found that customers who saw the popup were 50% less likely to exit the site than those who did not. Unsurprisingly, the customers who signed up to the newsletter had an even lower exit rate.

Finally, we looked at the effect of the experiment on overall conversion on the site. Interestingly, the conversion rate for users who signed up for the newsletter was approximately double that of those who did not.

Conclusion

The conclusion from this is that by setting up clear, measurable goals right at the start of any change we make to a site, we are able to see the exact effect it has. Because of that, we can measure results against the initial investment and clearly illustrate what the return was. For this particular project, the gains from running the test far outweighed the initial costs of setting it up.

This experiment was a huge success, not only for the main objective of gaining more newsletter signups via the website, but also for overall conversion and customer engagement.